A Field Guide to NanoGPT Speedrun Optimizations

Near the end of 2022, Andrej Karpathy released NanoGPT1, a small, hackable GPT. In 2024, Keller Jordan forked it to create the Modded NanoGPT2 repository and launched the NanoGPT speedrun, which seeks to improve NanoGPT’s training speed on a single 8xH100 node as much as possible.

Since then, Modded NanoGPT has gone from taking 45 minutes to train a model equivalent to GPT-2 Small to taking less than 90 seconds. Using a similar setup, Andrej Karpathy’s NanoGPT successor, NanoChat3, has reduced the time needed to train a GPT-2 XL level model from 168 hours to around 2 hours. These improvements have massively changed the economics of small-model training, making it possible to train a fully functional model for only around $50.

This post covers some of the most interesting and impactful upgrades used in both speedruns, with the goal of showing how model training got so fast. These improvements are presented in (approximate) order of importance.

Muon

Muon4 is an optimizer designed for the 2D parameter matrices of neural networks, and is used in both Modded NanoGPT and NanoChat. Notably, Muon has proven to be very effective even for larger models. For example, Moonlight5 estimates that Muon gives approximately 2x computational efficiency when compared to AdamW. For the remaining parameters, both Modded NanoGPT and NanoChat use AdamW instead. Muon uses standard stochastic gradient descent with momentum, but approximately orthogonalizes each update matrix using a few steps of a Newton-Schulz iteration (five by default in the original implementation). Why does this help so much? The original Muon blog post4 conjectures that:

> The updates produced by both SGD-momentum and Adam for the 2D parameters in transformer-based neural networks typically have very high condition number. That is, they are almost low-rank matrices, with the updates for all neurons being dominated by just a few directions. We speculate that orthogonalization effectively increases the scale of other “rare directions” which have small magnitude in the update but are nevertheless important for learning.

Modded NanoGPT and NanoChat use an efficient distributed version of Muon that distributes the Newton-Schulz iteration over multiple GPUs6.
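The orthogonalization step can be sketched in plain Python as follows. This is a minimal single-device illustration, not the distributed implementation either repository uses. The quintic coefficients and the choice of five steps follow the original Muon write-up, while the helper names (`matmul`, `transpose`, `newton_schulz`) and the `eps` constant are assumptions made for this example.

```python
import math

# Quintic coefficients quoted in the Muon write-up; treated here as tuned constants.
A_COEF, B_COEF, C_COEF = 3.4445, -4.7750, 2.0315


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def newton_schulz(update, steps=5, eps=1e-7):
    """Approximately orthogonalize `update` by pushing its singular values toward 1."""
    norm = math.sqrt(sum(v * v for row in update for v in row))
    x = [[v / (norm + eps) for v in row] for row in update]  # singular values now <= 1
    tall = len(x) > len(x[0])
    if tall:  # work in the wide orientation so X @ X^T is the smaller product
        x = transpose(x)
    for _ in range(steps):
        gram = matmul(x, transpose(x))
        gram_sq = matmul(gram, gram)
        poly = [[B_COEF * g + C_COEF * g2 for g, g2 in zip(r, r2)]
                for r, r2 in zip(gram, gram_sq)]
        poly_x = matmul(poly, x)
        # X <- a*X + (b*A + c*A^2) @ X, with A = X @ X^T
        x = [[A_COEF * v + p for v, p in zip(r, pr)] for r, pr in zip(x, poly_x)]
    return transpose(x) if tall else x


# A badly conditioned update: one direction is 100x larger than the other.
orth = newton_schulz([[10.0, 0.0], [0.0, 0.1]])
```

Starting from an update whose two directions differ by a factor of 100, both diagonal entries of `orth` come out at roughly the same magnitude, which is the "rare directions" effect described in the quote above. The quintic is tuned for speed rather than exact convergence, so the singular values land near 1 rather than exactly on it.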

Cautious Weight Decay

Cautious weight decay7 only applies weight decay to a weight when the update and the weight have the same sign ($\text{update} \times \text{weight} > 0$). Taking the convention that the update is subtracted from the weight, matching signs mean that the update is already shrinking the weight, so decay pulls in the same direction. When the signs differ, the update is pushing the weight away from zero, and decay would work against it, so decay is skipped. Both Modded NanoGPT and NanoChat use cautious weight decay. One slight difference between the two is that Modded NanoGPT uses cautious weight decay for Adam as well, while NanoChat has no weight decay for Adam.
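As a rough elementwise sketch of the masking rule, consider the following. It assumes the update is subtracted from the weight and that decay is decoupled and scaled by the learning rate. The function name and the hyperparameter values are illustrative only, and are not taken from either codebase.

```python
def cautious_decay_step(weights, updates, lr, weight_decay):
    """One optimizer step where decay is applied only if update * weight > 0."""
    new_weights = []
    for w, u in zip(weights, updates):
        # Mask: decay only the coordinates whose sign matches the update's sign.
        decay = weight_decay * w if u * w > 0 else 0.0
        new_weights.append(w - lr * (u + decay))
    return new_weights


# Same weight, opposite update signs: only the first coordinate is decayed.
stepped = cautious_decay_step([1.0, 1.0], [0.5, -0.5], lr=0.1, weight_decay=0.1)
```

In this example the first coordinate receives both the update and the decay term, while the second receives the update alone.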

Momentum Warmup

Both Modded NanoGPT and NanoChat gradually increase the momentum used for Muon from 0.85 to 0.95. The intuition for this is that the loss landscape changes more rapidly early on, so lower momentum is desirable8.
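A schedule of this kind might look like the sketch below. The linear shape and the `warmup_steps=300` default are assumptions chosen for illustration; only the 0.85 and 0.95 endpoints come from the text above.

```python
def muon_momentum(step, warmup_steps=300, start=0.85, end=0.95):
    """Linearly interpolate momentum from `start` to `end`, then hold it at `end`."""
    frac = min(step / warmup_steps, 1.0)
    return (1 - frac) * start + frac * end


early, late = muon_momentum(0), muon_momentum(10_000)
```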

Attention

This section covers various improvements to the attention mechanism, mainly along two lines:

- Moving to more optimized implementations of the attention computation

- Alterations to the size of the attention window

FlashAttention

The FlashAttention series of optimized attention implementations gives a major speedup to attention computations9 10 11. Both Modded NanoGPT and NanoChat use FlashAttention 3.

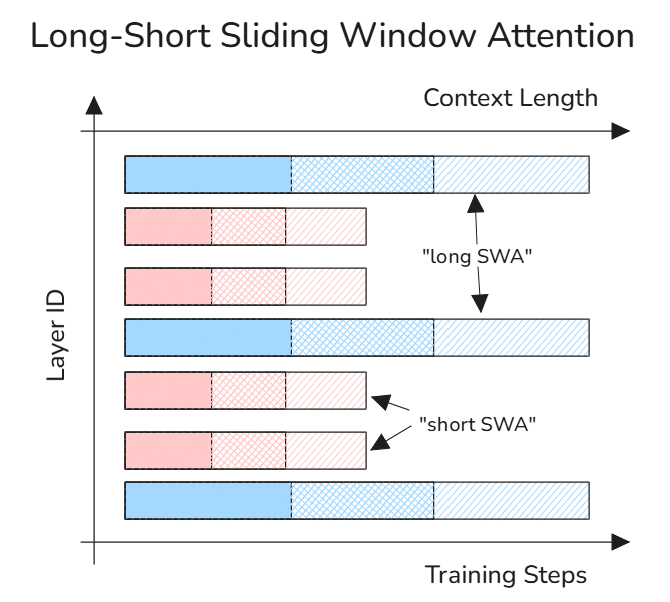

Sliding Window Attention

Both Modded NanoGPT and NanoChat interleave short-window and long-window attention layers in a repeating SSSL pattern: three short-window layers followed by one long-window layer whose window is twice as long. The final layer is always forced to use a long window. Modded NanoGPT alters this pattern slightly because it has only 11 layers, and uses long-window attention only on layers 4 and 11.
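The generic SSSL layout can be generated with a few lines of Python. This sketch covers only the unmodified repeating pattern with a forced long final layer, so it does not reproduce the Modded NanoGPT variation for 11 layers. The function names and the `long_window=2048` default are hypothetical.

```python
def window_pattern(n_layers, pattern="SSSL"):
    """Repeat `pattern` across the layers and force the final layer to be long."""
    layers = [pattern[i % len(pattern)] for i in range(n_layers)]
    layers[-1] = "L"
    return "".join(layers)


def window_sizes(n_layers, long_window=2048):
    """Per-layer window sizes, with short windows half the long window."""
    short_window = long_window // 2
    return [long_window if kind == "L" else short_window
            for kind in window_pattern(n_layers)]


layout = window_pattern(12)
```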

A similar method of alternating short and long attention windows was used in GPT-312.

Attention Window Warmup

Modded NanoGPT uses an attention window schedule, where the size of the attention window is gradually increased13. One disadvantage of changing the window size when using FlashAttention 3 is that each change requires some recompilation. Due to this, Modded NanoGPT increases the window size in a few large steps throughout training.
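A stepwise schedule along these lines could be sketched as follows. The stage sizes and the evenly spaced stage boundaries are hypothetical, and the actual Modded NanoGPT schedule may differ; the point is that the number of distinct sizes bounds the number of recompilations.

```python
def window_size(step, total_steps, sizes=(256, 512, 1024)):
    """Return the attention window for `step`, growing in a few discrete stages."""
    stage = min(step * len(sizes) // total_steps, len(sizes) - 1)
    return sizes[stage]


# Only three distinct window sizes are ever requested over 900 steps.
distinct = sorted({window_size(s, 900) for s in range(900)})
```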

ClimbMix

While Modded NanoGPT does not allow changing the training data, NanoChat has experimented with several different training datasets, eventually settling on Nemotron-ClimbMix14. ClimbMix was created through the following procedure:

- Begin with data from:

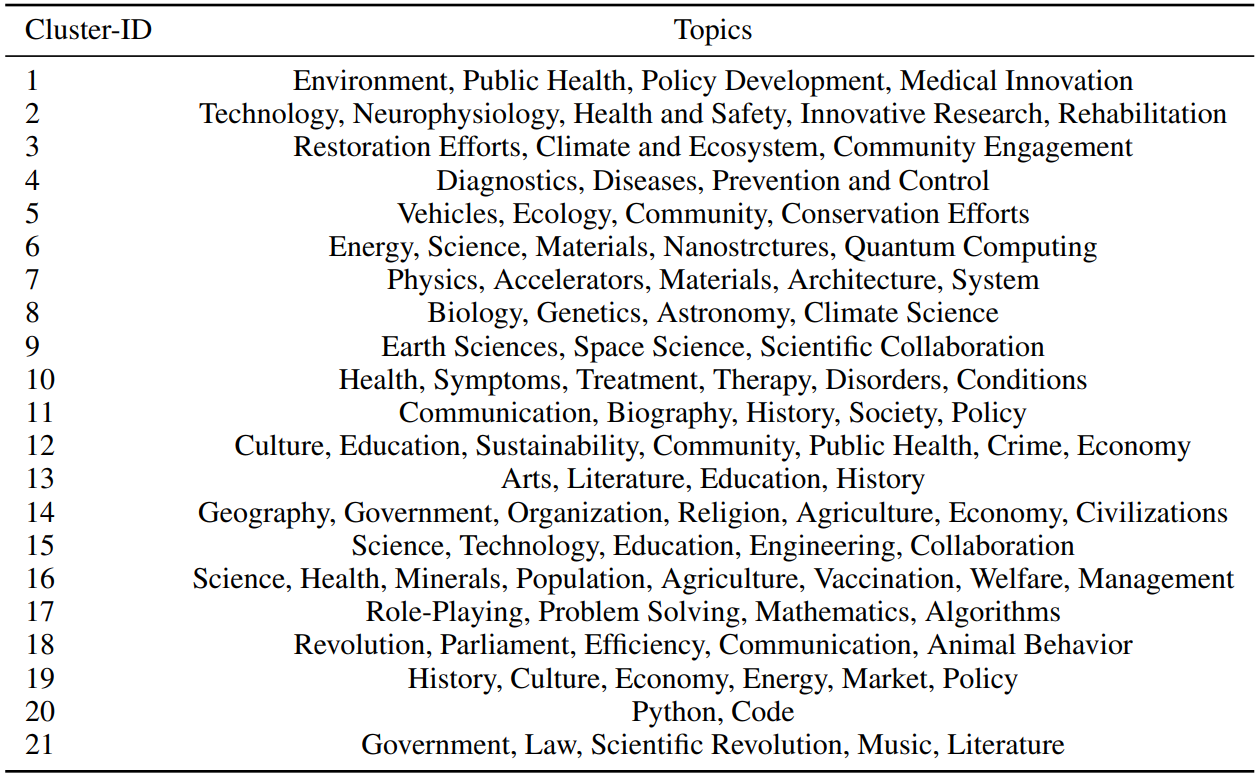

- Cluster the data, creating 21 semantic clusters that cover a variety of different topics

- Using a small model, learn the optimal data mixture for performance on downstream tasks

This results in a high-quality dataset that appropriately weights the frequency of various topics.

Modded NanoGPT uses FineWeb-Edu, a deduplicated and filtered selection of Common Crawl that was further filtered for data with high educational quality.

Architectural Modernizations

This section includes architectural modifications that are already relatively well-known, and are implemented in both Modded NanoGPT and NanoChat with minimal adjustments. Additionally, many of these adjustments are fairly standard in modern open-source LLMs. In that sense, the modifications in this section can be considered to be bringing Modded NanoGPT and NanoChat up to speed with modern Transformer implementations.

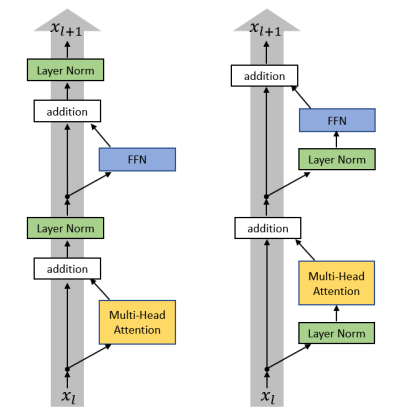

Pre-Norm

Pre-norm20 moves the normalization blocks out of the residual stream, placing them at the start of each sub-block (attention / MLP). This controls the gradient norm and increases training stability. This was also used in GPT-221, along with many other models.
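The difference in ordering can be shown with a toy sketch. Here `toy_norm` is a stand-in normalizer and `zero_sublayer` is a sub-block that contributes nothing; both are hypothetical helpers used only to make the effect on the residual stream visible.

```python
def post_norm_block(x, sublayer, norm):
    """Post-norm ordering: normalize after the residual add."""
    return norm([a + b for a, b in zip(x, sublayer(x))])


def pre_norm_block(x, sublayer, norm):
    """Pre-norm ordering: normalize only the sub-block's input."""
    return [a + b for a, b in zip(x, sublayer(norm(x)))]


def toy_norm(x):
    """Stand-in normalizer: rescale to unit maximum magnitude."""
    scale = max(abs(v) for v in x)
    return [v / scale for v in x]


def zero_sublayer(x):
    """A sub-block that adds nothing to the residual stream."""
    return [0.0 for _ in x]


x = [4.0, -2.0]
pre = pre_norm_block(x, zero_sublayer, toy_norm)    # residual stream passes through untouched
post = post_norm_block(x, zero_sublayer, toy_norm)  # residual stream itself is rescaled
```

With pre-norm, the residual stream is an unnormalized running sum that each sub-block only reads through a normalizer, whereas post-norm rescales the stream itself at every block.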

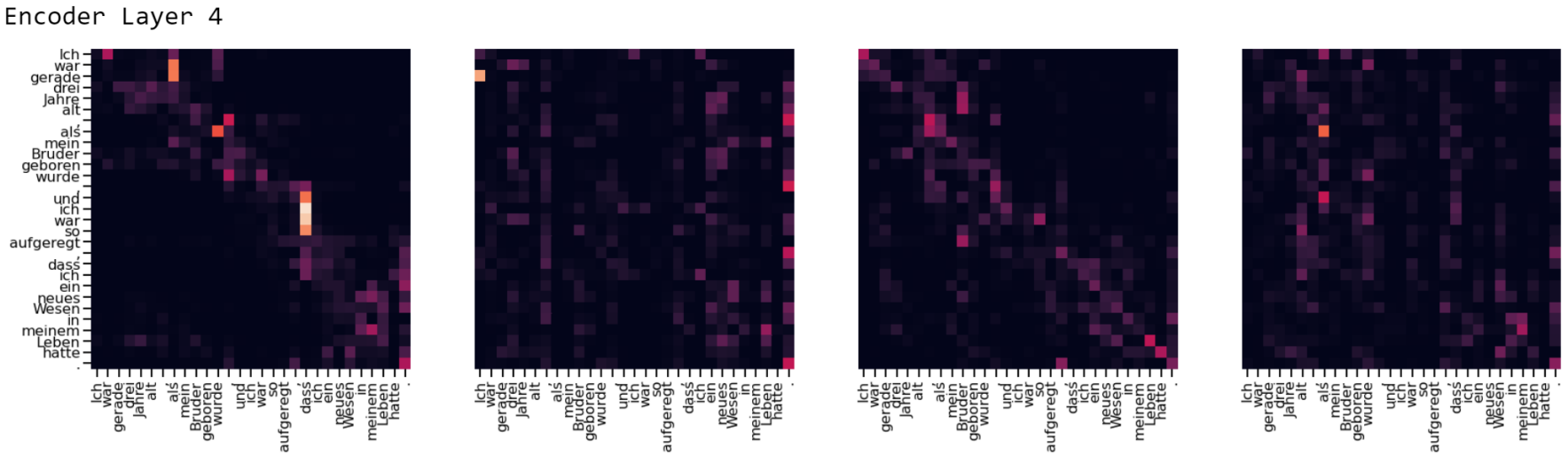

QKNorm

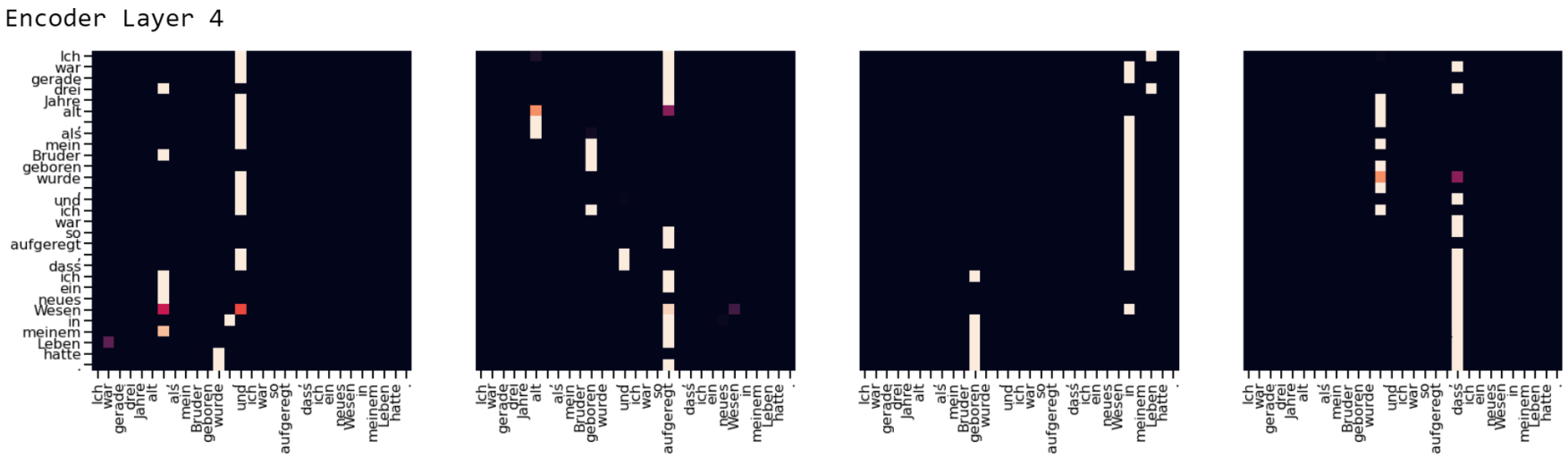

QKNorm22 normalizes each query vector $q_i$ and key vector $k_j$ after splitting along the head dimension and applying RoPE. While the original QKNorm paper uses the $\ell_2$-norm, both NanoChat and Modded NanoGPT apply RMSNorm instead. The primary benefit of this improvement is stopping the softmaxes in the attention computation from being easily saturated by large key or query vectors, allowing for more diverse attention patterns. This also improves training by stopping the attention gradients from growing extremely small. However, one disadvantage of this approach is that it can lead to the model not being able to sufficiently “focus” on important tokens, which has led to some later efforts to tweak the attention scale23.
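To make the saturation argument concrete, here is a short worked bound. It assumes gain-free RMSNorm over a head dimension $d$ (ignoring the small stabilizing epsilon) and the standard $1/\sqrt{d}$ attention scale. After normalization every query and key has RMS 1, so by the Cauchy-Schwarz inequality

\[\lVert \overline{q}_i \rVert_2 = \lVert \overline{k}_j \rVert_2 = \sqrt{d} \quad\Longrightarrow\quad \left| \frac{\overline{q}_i \cdot \overline{k}_j}{\sqrt{d}} \right| \le \frac{\sqrt{d} \cdot \sqrt{d}}{\sqrt{d}} = \sqrt{d}\]

For an illustrative head dimension of $d = 128$, every attention logit therefore lies in roughly $[-11.3, 11.3]$. The same bound that prevents saturation also limits how sharply the softmax can concentrate on a single token, which is the motivation for tuning the attention scale.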

RMSNorm

RMSNorm24 normalizes all input vectors $\mathbf{a}$ according to the formula

\[\overline{a}_i = \frac{a_i}{\text{RMS}(\mathbf{a})} g_i\]

where $a_i$ is the $i$-th value of $\mathbf{a}$, $g_i$ is a learnable gain parameter, and RMS is the root mean square of the relevant vector. Both Modded NanoGPT and NanoChat also drop the gain parameter $g_i$, yielding

\[\overline{a}_i = \frac{a_i}{\text{RMS}(\mathbf{a})}\]

RMSNorm is a simpler and faster normalization method than LayerNorm25, which was used in the original Transformer.
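The gain-free variant is short enough to write out directly. The sketch below follows the second formula above; the small `eps` term is an assumption added for numerical stability and does not appear in the formula.

```python
import math


def rms_norm(a, eps=1e-6):
    """Divide each entry by the root mean square of the vector (no learnable gain)."""
    rms = math.sqrt(sum(v * v for v in a) / len(a) + eps)
    return [v / rms for v in a]


normed = rms_norm([3.0, 4.0])
```

The output has a root mean square of approximately 1, and rescaling the input by a positive constant leaves the output essentially unchanged.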

RoPE

Rotary Position Embeddings (RoPE)26 add positional information to the query and key vectors before performing the attention computation, rather than adding positional information when constructing the initial hidden state. More specifically, RoPE rotates pairs of dimensions in the query and key vectors based on each token’s position and the rotation speed for that dimension pair. RoPE helps models learn to include positional information without adding extra parameters.
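The rotation can be sketched for a single vector as follows. This example pairs adjacent dimensions and uses a frequency base of 10000, as in the RoFormer paper; real implementations often pair dimensions differently (for example, splitting the vector in half), so treat the pairing, the function names, and the sample vectors as assumptions.

```python
import math


def rope(vec, position, base=10000.0):
    """Rotate each adjacent pair of dimensions by an angle proportional to `position`."""
    d = len(vec)  # must be even
    out = list(vec)
    for i in range(0, d, 2):
        theta = position * base ** (-i / d)  # later pairs rotate more slowly
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out[i] = x * c - y * s
        out[i + 1] = x * s + y * c
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


q = [1.0, 2.0, 3.0, 4.0]
k = [0.5, -1.0, 2.0, 0.0]
near = dot(rope(q, 3), rope(k, 1))  # positions 3 and 1, offset 2
far = dot(rope(q, 7), rope(k, 5))   # positions 7 and 5, same offset 2
```

Because each pair is rotated rather than shifted, the query-key dot product depends only on the relative offset between the two positions, so `near` and `far` agree up to floating-point error.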

ReLU²

The ReLU² activation function27 has been previously shown to strike a good balance between having high sparsity and good performance28. ReLU² is defined as

\[\text{ReLU}^2(x) = \max(0, x)^2\]

Many modern LLMs instead use SwiGLU29 as their activation function. SwiGLU was tested in NanoChat at several scales, but consistently gave decreased performance.

Untied Embeddings

The original NanoGPT had tied embeddings, where the LM head matrix is the transpose of the input embedding matrix (as recommended by Press & Wolf30). Both Modded NanoGPT and NanoChat untie these matrices, which is common in modern LLMs. Untying is likely beneficial here because it increases the parameter count without causing a corresponding increase in the number of FLOPs per pass.

As a side note, tied embeddings can cause some unexpected issues (see Neel Nanda on the SolidGoldMagikarp token for one interesting example31).

Skip Connections & Value Embeddings

Value Embeddings

Value residual learning32 proposes modifying the computed value matrix $V_n$ on layer $n$ according to the formula

\[V'_n = \lambda_{n,1} \cdot V_n + \lambda_{n,2} \cdot V_1\]

This is essentially mixing together the values on layer $n$ with the values on layer 1, allowing access to the computed values from lower layers. A variant of this was originally implemented in Modded NanoGPT as

\[V'_n = (1 - \lambda_{n}) \cdot V_n + \lambda_{n} \cdot V_1\]

where $\lambda_{n}$ is a learnable parameter for each layer33.

Later, this formula was changed to mix $V_n$ and $VE_n$, where $VE_n$ are learnable value embeddings for the given layer34. More specifically, each layer is given an embedding matrix, which is then used to get layerwise value embeddings for each token, $VE_n$. The primary motivation for this change was to provide token-specific features to the attention values. This change replaces the original value residual learning formula with

\[V'_n = (1 - \lambda_{n}) \cdot V_n + \lambda_{n} \cdot VE_n\]

Both Modded NanoGPT and NanoChat use value embeddings. However, they structure them slightly differently:

- NanoChat adds value embeddings to every other layer

- Modded NanoGPT shares value embeddings in a U-Net style pattern35 36. For example, in the 12 layer architecture used at the time, layers 1 and 12 would share the same value embeddings, layers 2 and 11 would have the same value embeddings, etc.

Value embeddings seem to be particularly helpful for model speedrunning because they add a large number of parameters without causing a correspondingly large increase in the number of FLOPs per token.
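The value embedding mix and the U-Net style sharing rule can be sketched as below. The function names, the tiny two-token table, and the fixed `lam` are illustrative assumptions; in the real models the table and the mixing weight are learned.

```python
def mix_value_embeddings(values, token_ids, value_table, lam):
    """Compute V' = (1 - lam) * V + lam * VE, where VE is looked up per token."""
    mixed = []
    for v, tok in zip(values, token_ids):
        ve = value_table[tok]  # a table lookup, not a matrix multiply
        mixed.append([(1 - lam) * a + lam * b for a, b in zip(v, ve)])
    return mixed


def unet_partner(layer, n_layers):
    """1-indexed layer that shares a value embedding table with `layer`."""
    return n_layers + 1 - layer


table = [[0.0, 0.0], [3.0, 5.0]]   # one row per vocabulary entry
values = [[1.0, 1.0], [2.0, 2.0]]  # computed values for two positions
mixed = mix_value_embeddings(values, token_ids=[1, 0], value_table=table, lam=0.5)
```

The lookup is why the extra parameters are cheap: each table holds one row per vocabulary entry, but each token touches only a single row.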

Hidden State Residual Connections

Modded NanoGPT also adds skip connections for the hidden state33, updating the hidden state at the start of layer $n$ according to

\[X'_n = \lambda_{n,1} \cdot X_n + \lambda_{n,2} \cdot X_1\]

Since $X_1$ is the hidden state at the start of the first layer, this is equivalent to mixing the current layer’s hidden state and the relevant token embeddings.

This was later modified to use a U-Net style architecture35, adding connections to previous hidden states37. This changed the hidden state residual formula to

\[X'_n = \lambda_{n,1} \cdot X_n + \lambda_{n,2} \cdot X_1 + \lambda_{n,3} \cdot X_k\]

This change was only made for $n > \frac{\text{layers}}{2}$, with $k = \text{layers} - n + 1$. For example, with the 12 layer architecture used at the time, only layers 7 through 12 would use this updated formula. Layer 6 would feed into 7, 5 into 8, and so on, up to 1 into 12.
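The index rule for the U-Net style connections is easy to check with a small helper. The function name `skip_source` is hypothetical; the rule itself is the $k = \text{layers} - n + 1$ formula given above, using 1-indexed layers.

```python
def skip_source(n, n_layers):
    """Return the earlier layer k whose hidden state feeds layer n, or None."""
    if n <= n_layers / 2:
        return None  # first-half layers receive no U-Net skip connection
    return n_layers - n + 1


connections = {n: skip_source(n, 12) for n in range(1, 13)}
```

For 12 layers, this yields connections only for layers 7 through 12, pairing them with layers 6 down to 1.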

Logit Soft-Capping

Gemma 238 applies a soft cap to logits, using the following formula

\[\text{logits} \gets \text{soft_cap} \cdot \tanh\!\left(\frac{\text{logits}}{\text{soft_cap}}\right)\]

This limits the logits to the range $(-\text{soft_cap}, +\text{soft_cap})$. One intuition for why this is helpful is that it limits the model’s confidence; for small models, it is less likely that they will be completely certain of the next token, so capping the logits stops them from expressing 100% certainty. This also helps with vanishing gradient issues.

Both Modded NanoGPT and NanoChat implement this soft cap with $\text{soft_cap} = 15$. However, they only use the soft cap for the LM head, while Gemma 2 applies it to the attention logits as well. It is likely that the optimal value of the soft cap would be larger for larger models, since the ability to express increased confidence would become more useful.
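The soft cap formula translates directly into code. This sketch operates on a plain list of logits; the function name is an assumption, and the default of 15 is the value quoted above.

```python
import math


def soft_cap_logits(logits, soft_cap=15.0):
    """Squash logits smoothly so their magnitude cannot exceed soft_cap."""
    return [soft_cap * math.tanh(z / soft_cap) for z in logits]


capped = soft_cap_logits([-1000.0, 0.0, 1.0, 1000.0])
```

Small logits pass through almost unchanged, since $\tanh(x) \approx x$ near zero, while extreme logits are squashed to the cap.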

Low Precision

Both Modded NanoGPT and NanoChat have attempted to reduce the precision of their models, but in different ways.

Modded NanoGPT uses FP8 for the LM head only. This was also tested in NanoChat, but did not give a significant benefit.

Instead, NanoChat uses FP8 for all linear layers, which increases training throughput (in tokens per second) by approximately 17%. However, it takes more tokens to reach the same validation loss, so the net speedup is smaller (~5%). This seems to give greater benefits for larger models, as testing full FP8 on smaller models made them slower overall. Full FP8 is most effective when using tensorwise scaling, rather than rowwise scaling.

Miscellaneous Improvements

Aligning to Multiples of 64

Both Modded NanoGPT and NanoChat inherit padded embeddings from the original NanoGPT. While the original NanoGPT had a vocabulary size of 50,257, padding to the next multiple of 64 (50,304) gave a major speedup39. In general, it is important to make sure that matrix dimensions are multiples of a sufficiently large power of 2 (16, 32, 64, 128, etc).
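The padding arithmetic can be verified with a one-line helper. The function name is hypothetical; the vocabulary size and the multiple of 64 come from the paragraph above.

```python
def pad_to_multiple(n, multiple=64):
    """Round n up to the nearest multiple of `multiple`."""
    return -(-n // multiple) * multiple  # ceiling division, then scale back up


padded_vocab = pad_to_multiple(50257)
```

The 47 extra rows correspond to token IDs that never occur in the data, so they only change the shape of the matrix multiplications.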

Zero-Initialized Output Layers

In appendix D.2, Tensor Programs V 40 suggests initializing output layers as zero, which makes the optimal hyperparameters for different model sizes match more closely. Both Modded NanoGPT and NanoChat follow this recommendation by initializing the final attention projection layer as zero and initializing the output layer of all MLPs as zero. Interestingly, this gives a speedup on Modded NanoGPT, despite Tensor Programs V only recommending it as a way to improve hyperparameter transfer, and simply noting that “we do not find this modification to be detrimental to performance”.
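One way to see what zero initialization does is to run a toy residual MLP block whose output projection is all zeros. The weights, sizes, and helper names below are arbitrary assumptions for illustration.

```python
def linear(x, weight):
    """Apply a weight matrix stored as a list of output rows."""
    return [sum(w * v for w, v in zip(row, x)) for row in weight]


def mlp_block(x, w_in, w_out):
    """Residual MLP block with a ReLU^2 activation."""
    hidden = [max(0.0, h) ** 2 for h in linear(x, w_in)]
    return [a + b for a, b in zip(x, linear(hidden, w_out))]


x = [1.0, -2.0]
w_in = [[0.3, -0.1], [0.2, 0.4], [-0.5, 0.6]]  # 3 hidden units, arbitrary values
w_out = [[0.0] * 3 for _ in range(2)]          # zero-initialized output projection
out = mlp_block(x, w_in, w_out)
```

At initialization the block contributes nothing, so `out` equals `x` and the residual stream passes through unchanged until training moves the output projection away from zero.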

Further Reading

Some other writeups and resources that may be of use:

- Andrej Karpathy’s post Beating GPT-2 for <<$100: the nanochat journey 41 covers many of his initial optimizations for NanoChat and has a list of what worked and what didn’t work.

- Andrej Karpathy’s NanoChat experiment log42 contains many useful details on what kind of optimizations were tested, and what the results were. This was the primary source for most of the NanoChat optimization details.

- The Modded NanoGPT README (primarily maintained by Keller Jordan and Larry Dial)2 covers the various speedrun world records and their associated improvements.

- Larry Dial’s writeup How the NanoGPT Speedrun WR dropped by 20% in 3 months 43 covers many of the more recent modifications to Modded NanoGPT (roughly covering the July–October range).

- Varun Srivastava’s writeup Muon in Modded NanoGPT 44 covers many of the improvements made to Muon for the Modded NanoGPT speedrun.

If you found this post useful, please cite it as:

@article{conway2026,

title = {A Field Guide to NanoGPT Speedrun Optimizations},

author = {Evan Conway},

year = {2026},

month = {Mar},

url = {https://evanjayconway.com/posts/2026/nanogpt-improvements/}

}

References

- Jordan et al 2024, modded-nanogpt README

- Karpathy 2025, nanochat: The best ChatGPT that $100 can buy

- Jordan et al 2024, Muon: An optimizer for hidden layers in neural networks

- Liu et al 2025, Muon is Scalable for LLM Training

- Chen et al 2025, Cautious Weight Decay

- Dao et al 2022, FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness

- Dao 2023, FlashAttention-2: Faster Attention with Better Parallelism and Work Partitioning

- Shah et al 2024, FlashAttention-3: Fast and Accurate Attention with Asynchrony and Low-precision

- Brown et al 2020, Language Models are Few-Shot Learners

- Diao et al 2025, Nemotron-CLIMB: CLustering-based Iterative Data Mixture Bootstrapping for Language Model Pre-training

- Su et al 2024, Nemotron-CC: Transforming Common Crawl into a Refined Long-Horizon Pretraining Dataset

- Allal et al 2024, SmolLM-Corpus

- Penedo et al 2024, The FineWeb Datasets: Decanting the Web for the Finest Text Data at Scale

- Kocetkov et al 2022, The Stack: 3 TB of permissively licensed source code

- Xiong et al 2020, On Layer Normalization in the Transformer Architecture

- Radford et al 2019, Language Models are Unsupervised Multitask Learners

- Henry et al 2020, Query-Key Normalization for Transformers

- Zhang & Sennrich 2019, Root Mean Square Layer Normalization

- Ba et al 2016, Layer Normalization

- Su et al 2023, RoFormer: Enhanced Transformer with Rotary Position Embedding

- So et al 2022, Primer: Searching for Efficient Transformers for Language Modeling

- Zhang et al 2024, ReLU² Wins: Discovering Efficient Activation Functions for Sparse LLMs

- Shazeer 2020, GLU Variants Improve Transformer

- Press & Wolf 2017, Using the Output Embedding to Improve Language Models

- Neel Nanda 2023, comment on “SolidGoldMagikarp (plus, prompt generation)”

- Zhou et al 2025, Value Residual Learning

- Ronneberger et al 2015, U-Net: Convolutional Networks for Biomedical Image Segmentation

- Gemma Team 2024, Gemma 2: Improving Open Language Models at a Practical Size

- Yang et al 2022, Tensor Programs V: Tuning Large Neural Networks via Zero-Shot Hyperparameter Transfer

- Karpathy 2026, Beating GPT-2 for <<$100: the nanochat journey

- Karpathy 2025, dev/LOG.md

- Dial 2025, How the NanoGPT Speedrun WR dropped by 20% in 3 months

- Srivastava 2025, Muon in Modded NanoGPT